Dr. Yu-Hsuan Huang and his team at National Taiwan University saw a need for augmented reality applications in remote-collaborative settings when they undertook the research and development of Scope+, a stereoscopic video see-through augmented reality microscope. Dr. Huang is an ophthalmologist by training as well as a doctoral student in computer science, and he describes Scope+ as the first AR application in the microscopic world. Scope+ was developed to train and educate new eye doctors in highly motion-sensitive procedures involving the human eye.

The primary motivation behind Scope+ was the fact that existing systems for surgical training focus on open abdomen, heart and orthopedic procedures. These applications, while very important for their respective disciplines, are not easily transferable to the realm of microsurgery. Scope+ addresses the training and education needs of the various fields that depend on microsurgery, such as cosmetic surgery, brain surgery and, of course, eye surgery.

System Design

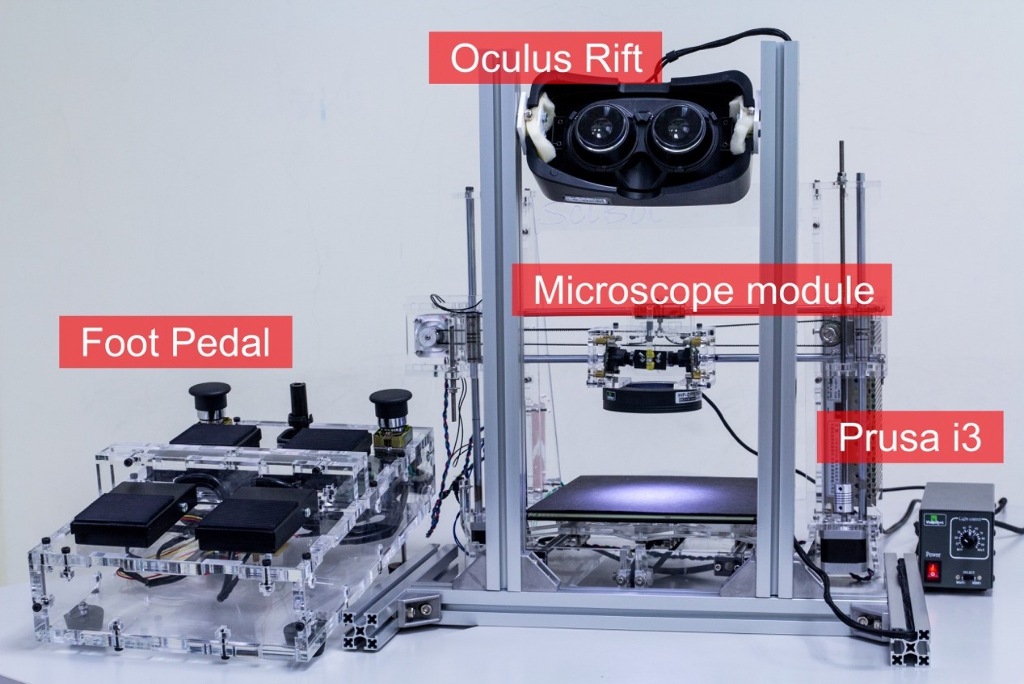

The system design of Scope+ integrates the tools and equipment used in microsurgery with VR and AR technologies. An Oculus Rift HMD (head-mounted display) and a modified Prusa i3 3D printer (SciBot) are the main components of the system. A specially designed stereo microscope module replaces the extruder of the 3D printer and provides stereo vision when a user observes a subject through the HMD. A joystick was remodeled into an integrated foot pedal controller, a device commonly found in microsurgery, especially in eye surgery. With it, users of the Scope+ system can translate the observation field, magnify the target, change the position of the focal plane, adjust the intensity of the light source and traverse a sample array, all while keeping their hands on the subject they are observing and interacting with. The microscope module, which is compatible with the Prusa i3, is built from two high-resolution (1600 × 1200 pixels) industrial cameras and two mutually perpendicular reflection mirrors. The working distance of the cameras and the interpupillary distance (the distance between the centers of the pupils of the two eyes) can be adjusted to an individual's needs, using an algorithm that maintains comfortable stereo vision.

While Scope+ is designed around a single user who looks into the microscope and operates the pedals, the system also promotes an important collaborative element for remote work and training. One of the biggest issues when working remotely, especially with visual material such as video and graphics, is latency. If the latency between users is too great, real-time simulations of actual procedures cannot be tested and practiced. Scope+ addresses this issue by incorporating a GPU acceleration pipeline and multithreaded rendering to reduce latency and keep a high frame rate (30 frames per second) even with dual full HD resolution cameras. Some latency remains, but it is minimized and, more importantly, the remote users experience it together, so it feels as though there is none at all.
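To put the frame-rate figure in perspective, a rough back-of-the-envelope calculation can help. The sketch below is illustrative only and is not the Scope+ implementation. It takes the 30 frames-per-second target and the 1600 × 1200 camera resolution quoted earlier, and it assumes 3 bytes per pixel (uncompressed RGB), a value the researchers do not state. The function names are hypothetical.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to capture, process and display one frame, in milliseconds."""
    return 1000.0 / fps


def stereo_throughput_mb_per_s(width: int, height: int, fps: float,
                               cameras: int = 2, bytes_per_pixel: int = 3) -> float:
    """Raw (uncompressed) data rate across all cameras, in megabytes per second.

    bytes_per_pixel=3 is an assumption (8-bit RGB), not a published Scope+ figure.
    """
    bytes_per_frame = width * height * bytes_per_pixel
    return bytes_per_frame * cameras * fps / 1_000_000


if __name__ == "__main__":
    # Per-frame time budget at the 30 fps target.
    print(f"{frame_budget_ms(30):.1f} ms per frame")
    # Raw data rate for two 1600 x 1200 cameras at 30 fps.
    print(f"{stereo_throughput_mb_per_s(1600, 1200, 30):.1f} MB/s")
```

Under these assumptions, each stereo frame pair has to be captured, processed and displayed in roughly 33 ms, while the two cameras together produce on the order of 346 MB of raw pixel data per second. Numbers of that size suggest why spreading the work across GPU and multiple threads is a natural choice.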

Virtual training for real-life applications

To practice their surgical techniques, eye surgeons have traditionally had to rely on physical objects as substitutes for real human eyes. In existing practice, animal eyes (such as pig eyes), fabricated silicone eyes and head models with eyes in the eye sockets are used for training new(er) surgeons. The problem with animal and model eyes, according to Dr. Huang, is that they are not real or accurate when compared to the human eye. Preparation is also a challenge, as the animal or model eyes need to be procured or made, and their useful life is very short because they can only be used once. Another issue, especially when working with animal eyes, is that blood and other liquids tend to leak out during the procedure. With Scope+, the biggest fluid to be concerned about is the user's sweat as they concentrate on an important task of micro proportions.

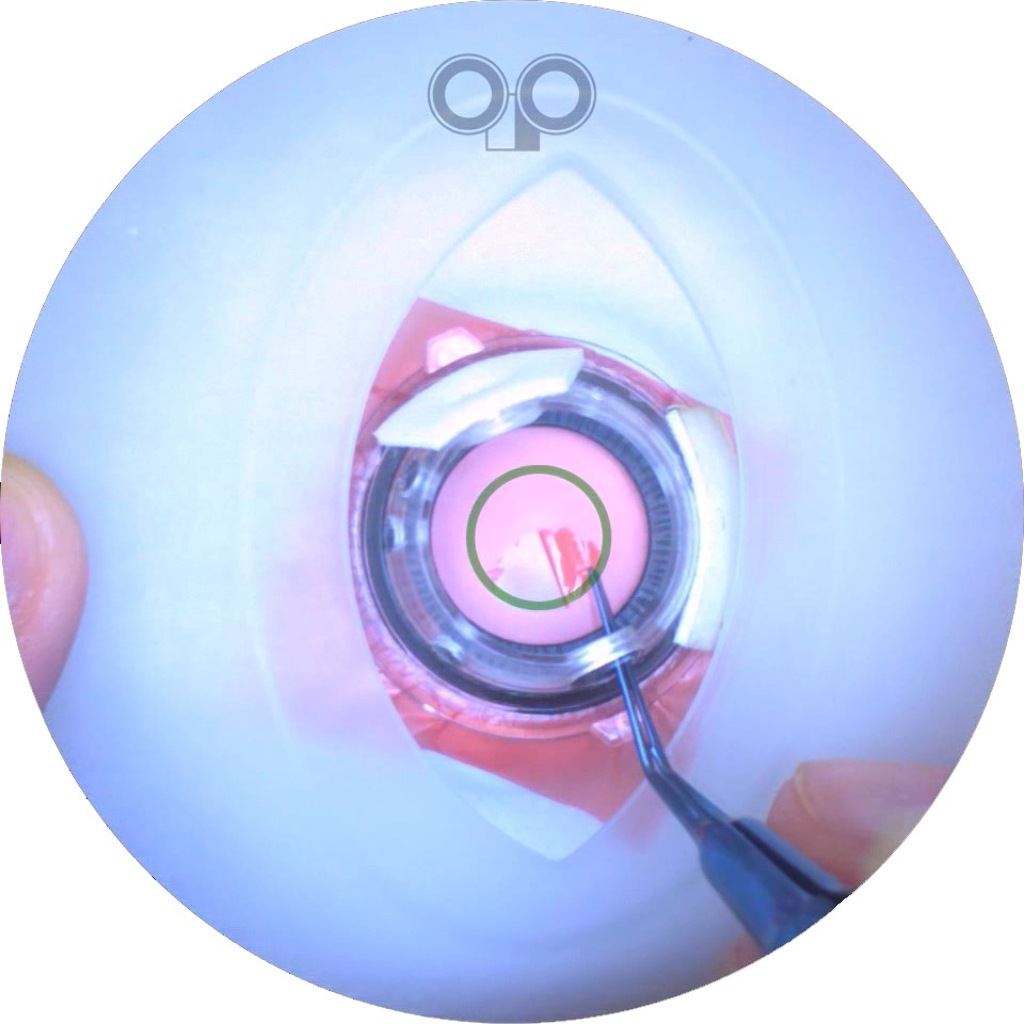

Besides reducing costs and avoiding messy cleanup, the Scope+ system offers users more scope in the types of simulations and training they can do. Not only is the system infinitely reusable as a resource, but it can also create special situations that surgeons may encounter in actual procedures. For example, certain types of ocular procedures involve creating a circular hole on top of the eye lens. If this is not done correctly, the whole lens may rupture, which would be disastrous. By allowing surgeons to practice over and over with the assistance of a trainer, who may be close to the user or geographically very far away, Scope+ can help eye surgeons lower risk and avoid dangerous outcomes.

When using the system, the AR markers act as guidelines for the user, who can literally keep their eyes on the subject. Through gestures, the AR layer can provide information that would normally require physically removing one’s eye(s) from the microscope and looking it up in print or on a screen. Moreover, as the system is a mockup of an actual microsurgery setting, the same tools and instruments used in actual surgical procedures are used during training exercises. Scope+ is ideally suited to microsurgery training because microsurgery depends on visual rather than haptic or tactile feedback. A user working with Scope+ does not need to wear any special device or garment that gives force feedback; a lab coat should suffice.

Applications

There are numerous real-world applications for both the JackIn Head system and Scope+. The offline version of JackIn Head has already been tested and used to gather footage for advertising and commercial purposes. The researchers believe and hope that it can be used more widely in certain sports and extreme sports applications. With the 2020 Summer Olympics scheduled to be held in Tokyo, the Japanese researchers are in a uniquely advantageous position. People may be able to experience stabilized 360-degree footage of certain sports and exhibition events in the very near future.

The online version of JackIn Head, in its current iteration, can provide a stabilized, modified first-person point of view at more than 30 fps. Further research aims to make the system more portable and to provide higher resolution. The potential applications are numerous. The system could be greatly beneficial in disaster areas, where remote experts could assist those already on the ground. Long-distance vocational training could move beyond a two-dimensional plane, as the instructor and learner could work together as if they were next to one another.

The Scope+ system, which will be integrated into the ophthalmological residency program at National Taiwan University, will give new doctors (and not-so-new doctors) the opportunity to hone their skills and avoid mistakes during actual surgeries. Outside of the medical field, the system can be adapted for use in the biological sciences by adding details to dissections. Also, in keeping with the needs of modern-day curricula, electronic component assembly can be practiced over and over without having to buy and throw out circuit boards after they have been used.

Both JackIn Head and Scope+ show the true potential for VR and AR in practical applications. While there are still limitations with respect to resolution and frame rate, these challenges can be overcome as the necessary technologies advance and reach a friendlier consumer price-point. Both these systems prove that seeing is not only believing, but that seeing is also doing.